Oracle

Unix

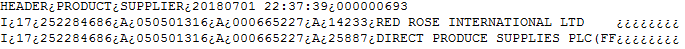

data.bcp

vim script.sh

!/bin/sh

rm -rf mapping.bcp

iconv -f ISO-8859-14 PRODUCT_SUPPLIER.bcp -t UTF-8 -o mapping.bcp

export NLS_LANG=AMERICAN_AMERICA.WE8ISO8859P1

sqlldr EXA_TUKMSTRP/quality1@exa_gbtukprdd_an control=mapping.ctl log=mapping1.log skip=1

$ chmod +x script.sh

mapping.ctl

load data

infile 'mapping.bcp'

replace

into table temp_del1

fields terminated by "?" OPTIONALLY ENCLOSED BY '"'

trailing nullcols

(

COL1 CHAR(200),

COL2 CHAR(200),

COL3 CHAR(200)

)

$ . script.sh

create table temp_del1(

COL1 varchar(200),

COL2 varchar(200),

COL3 varchar(200)

);

Windows

Convert file to UTF-8

iconv -f ISO-8859-14 CM_PRODUCT_SUPPLIER_000000693.bcp -t UTF-8 -o mapping.bcp

script.bat

sqlldr EXA_TUKMSTRP/quality1@exa_gbtukprdd_an CONTROL=test1.ctl LOG=test1.log BAD=test1.bad skip=1

pause

mapping.ctl

load data

CHARACTERSET WE8ISO8859P1

infile 'temp1.bcp'

replace

into table temp_del1

fields terminated by "?" OPTIONALLY ENCLOSED BY '"'

trailing nullcols

(

COL1 CHAR(200),

COL2 CHAR(200),

COL3 CHAR(200)

)

Python

from sqlalchemy import *

import csv

username = "prakriti"

password = "password"

database_instance = "exadata123"

conn_str = 'oracle://%s:%s@%s' % (username, password, database_instance)

engine = create_engine(conn_str)

CONN = engine.connect()

CONN.execute("Alter session enable parallel dml")

def utf_8_encoder(unicode_csv_data):

for line in unicode_csv_data:

yield line.decode('iso-8859-1').encode('utf-8')

ch = "\xbf".decode("iso-8859-1").encode('utf-8')

i = 1

with open('mapping.dat', 'rb') as csvfile:

spamreader = csv.reader(utf_8_encoder(csvfile))

for row in spamreader:

str1 = ''.join([word.replace('\xbf',',') for word in row])

str1 = ''.join([word.replace(ch,',') for word in row])

str2 = str1.replace("\'"," ")

str3 = ''.join("'" + str(s) + "'," for s in str2.split(",")).strip(",")

if i <> 1:

query = "insert into temp values("+str3+")"

CONN.execute(query)

i = i + 1